AURA Case Study

AURA is a digital wellbeing tool that helps people feel safer on social media. As an overlay, it acts as a protective layer that blocks unwanted content, allows users to curate their feed, and provides calming tools that help users take control and emotionally regulate—all without forcing them to completely disconnect.

Executive Summary

Problem: Social media algorithms prioritize engagement over user wellbeing, exposing users to harmful content with inadequate moderation tools. Users need better control over their emotional safety online.

Solution: AURA is a digital wellbeing tool that acts as a protective layer over existing social platforms, giving users proactive control through personalized content filtering, Calm Mode for an emotional reset, and real-time boundary protection.

Outcome: In usability testing with 5 participants, users reported feeling more in control and emotionally safe, which supports the premise that proactive design reduces harm. Key insights revealed the importance of supportive language, simplified UI, and designing for emotional context, not just content preferences.

Owning the End-to-End Experience

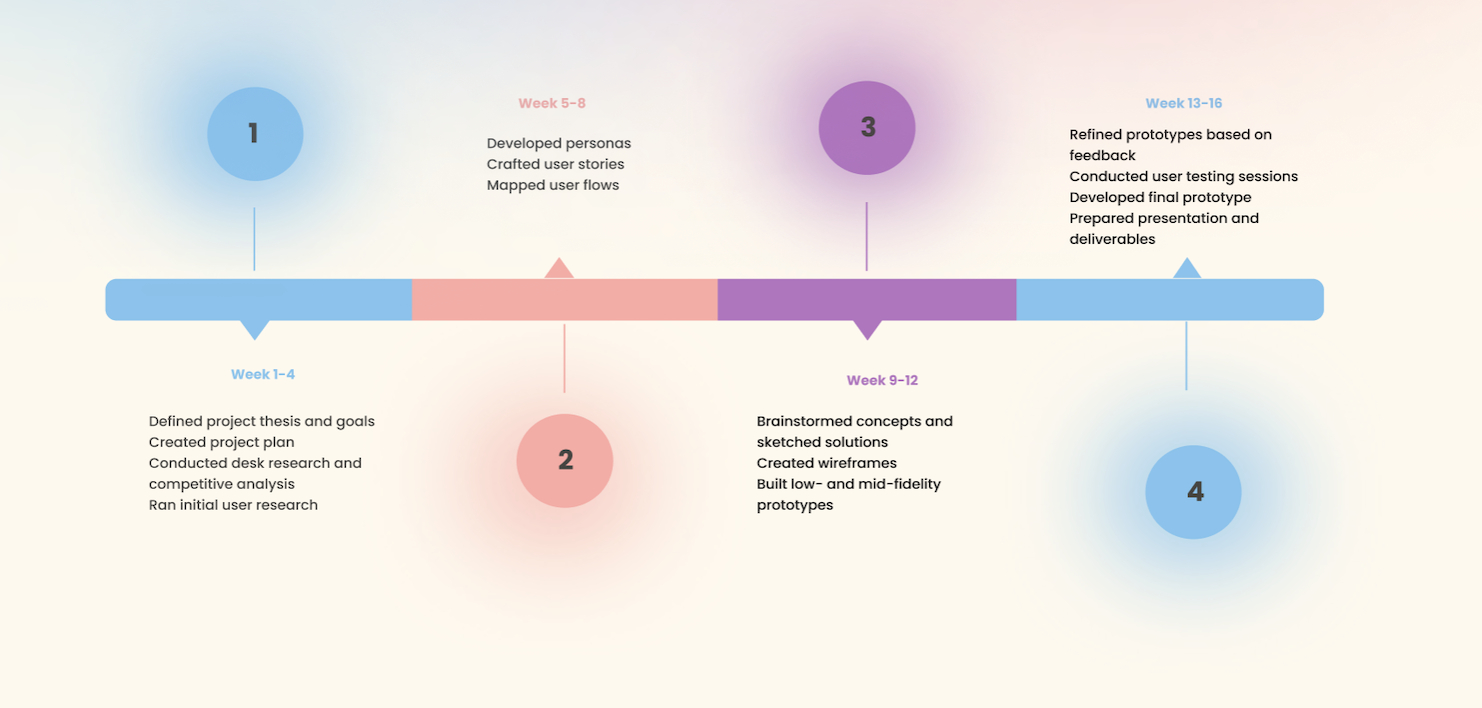

As the product designer, I designed an end-to-end product experience over the course of 16 weeks, grounding feature decisions in behavioral and emotional user insights. My responsibilities included:

Conducting research and gathering behavioral and emotional user insights

Developing the design strategy and guiding principles

Designing prototypes and onboarding flows

Conducting usability testing and synthesizing feedback

Uncovering The Problem

The Social Media Wellbeing Crisis

Social media algorithms care more about engagement than wellbeing. They expose users to harmful content repeatedly, and existing moderation tools don't work well enough.

The core issue: Users can't meaningfully control what they see. They want emotional safety, but social media platforms make that nearly impossible to achieve.

Initial Research

What I Looked At

I studied major platforms (Instagram, TikTok, X) and academic sources on algorithms and social media usage to understand how platforms deliver and moderate content.

What I Found

1. Algorithms prioritize popularity over user preferences

2. Content controls are buried in confusing menus

3. Users see the same upsetting content over and over

4. Platforms profit from engagement, not user wellbeing

Real Stories from Real Users

I surveyed and interviewed users about their social media habits, emotional responses, and what they wish existed.

100% use social media multiple times daily

50% feel overwhelmed by their feed

60% say moderation tools don't work

Key Insights:

Users want control over when and what type of content they see, based on their mood

They value proactive protection over reactive filtering

Emotional safety matters more than they expected

What This Meant: Users don't want to quit social media—they want it to work with them, not against them. They need tools that understand their emotional state, not just their interests.

The Question

How might we design a social media experience that protects wellbeing, respects boundaries, and promotes calm over chaos?

Meeting the People Behind the Problem

Through my research, two distinct user types emerged—each with different experience levels but the same core need: emotional safety and meaningful control over their social media experience.

Jami, 53 - New to Social Media

Jami joined social media to connect with family but quickly felt overwhelmed. She's intimidated by complex settings and wants simple controls.

What she needs:

Protection from upsetting content before it appears

Clear, simple tools to customize her feed

A calm, non-judgmental experience

What frustrates her:

Algorithms showing things she doesn't want to see

Can't find the right settings

Feels overwhelmed and confused

Maya, 28 - The Intentional Curator

Maya uses social media daily for design inspiration and networking. She's experienced but frustrated—unwanted content breaks her focus and trust.

What she needs:

Proactive filtering that actually works

Wellness features for stress management

A feed she can truly curate herself

What frustrates her:

Still sees upsetting content despite using filters

Current tools feel reactive and inconsistent

Polarizing posts cloud her carefully curated feed

Designing The Solution

Key Design Decisions

1. AURA as an Overlay, Not a Standalone App

Instead of creating another social platform, AURA works as a protective layer over existing apps. This means users don't have to abandon the platforms they already use—AURA just makes them safer.

Why this matters: Users aren't asking to leave social media. They want to stay connected but feel protected. An overlay approach meets them where they are.

2. Proactive Onboarding: Set Boundaries Early

Instead of waiting for users to encounter harmful content, AURA asks them to set preferences and boundaries during onboarding. This prevents problems before they happen.

Why this matters: Reactive moderation forces users to experience harm first, then filter. Proactive design protects from the start, respecting user agency while providing immediate emotional safety.
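To make the proactive idea concrete, here is a minimal Python sketch of boundaries being applied before a post is ever displayed. It is an illustration only: the names `Boundaries`, `Post`, `is_allowed`, and `filter_feed`, and the idea of matching on topic tags and keywords, are assumptions for this example and not part of the AURA prototype.

```python
from dataclasses import dataclass, field


@dataclass
class Boundaries:
    """Preferences a user sets during onboarding (hypothetical structure)."""
    blocked_topics: set[str] = field(default_factory=set)
    blocked_keywords: set[str] = field(default_factory=set)


@dataclass
class Post:
    """A simplified post: its text plus assumed topic tags."""
    text: str
    topics: set[str]


def is_allowed(post: Post, boundaries: Boundaries) -> bool:
    """Return True if the post crosses none of the user's boundaries."""
    # Any overlap between the post's topics and blocked topics hides the post.
    if post.topics & boundaries.blocked_topics:
        return False
    # Case-insensitive keyword check on the post text.
    lowered = post.text.lower()
    return not any(word.lower() in lowered for word in boundaries.blocked_keywords)


def filter_feed(posts: list[Post], boundaries: Boundaries) -> list[Post]:
    """Apply boundaries before display, so blocked posts are never shown."""
    return [post for post in posts if is_allowed(post, boundaries)]
```

The point of the sketch is the ordering: filtering happens before rendering, so the user never has to see a post in order to block it.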

3. Calm Mode as a Core Feature

Calm Mode isn't just an add-on—it's central to AURA's value. Users can switch to soothing content when stressed, then return to their regular feed when ready. It's designed for horizontal scrolling to feel intentional, not like endless passive scrolling.

Why this matters: Users don't just want topic filters—they want their feed to match their emotional state. The same person needs different content when stressed versus energized.

What Calm Mode Includes

When activated, Calm Mode delivers a cohesive package of interventions designed to reduce stress and promote real-time emotional regulation:

UI adjustments: Simplified visuals, calmer colors, and larger typography reduce cognitive load and sensory overwhelm.

Guided breathing exercises: Flowing visuals guide users step-by-step, helping them regulate breathing and regain calm quickly.

Curated calm content: Personalized content selections provide users with what they need to feel grounded and supported.

Horizontal scrolling: Replaces vertical feeds to interrupt doomscroll habits and create a restorative browsing experience.
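The Calm Mode package above can be pictured as a single bundle of setting overrides that is switched on together and leaves the regular feed settings untouched. The Python sketch below is hypothetical: `FeedSettings`, `CALM_OVERRIDES`, `apply_calm_mode`, and every value (such as the 1.25 font scale) are assumptions chosen for illustration, not values from the AURA prototype.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class FeedSettings:
    """Display settings for the feed (hypothetical fields and defaults)."""
    scroll_direction: str = "vertical"
    font_scale: float = 1.0
    palette: str = "default"
    content_source: str = "regular"
    breathing_exercise: bool = False


# Assumed overrides mirroring the four Calm Mode elements:
# UI adjustments, guided breathing, curated calm content, horizontal scrolling.
CALM_OVERRIDES = {
    "scroll_direction": "horizontal",
    "font_scale": 1.25,
    "palette": "calm",
    "content_source": "curated_calm",
    "breathing_exercise": True,
}


def apply_calm_mode(regular: FeedSettings) -> FeedSettings:
    """Return a new settings object with the Calm Mode overrides applied.

    The original object is not modified, so the user can return to
    their regular feed simply by switching back to it.
    """
    return replace(regular, **CALM_OVERRIDES)
```

Keeping the regular settings immutable reflects the design intent that Calm Mode is a temporary reset, not a permanent change to the feed.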

Testing, Learning, Iterating

I tested AURA with 5 participants in 30- to 45-minute Zoom sessions. They walked through onboarding, used Calm Mode, and managed their feed.

What I Changed Based on Feedback

1. Made AURA's Purpose Crystal Clear

Problem: Users didn't understand what AURA did or how to use it

Fix: Added clear feature highlights to onboarding (content blocking, Calm Mode, personalization)

2. Softened the Language

Problem: Notifications such as "Take a pause" felt judgmental and controlling

Fix: Changed to questions that offer choice ("Would you like a moment to reset?")

3. Simplified Calm Mode

Problem: UI felt cluttered and confusing

Fix: Redesigned for horizontal scrolling, cleaner layout, simple toggle

Reflection

This project strengthened my understanding of trauma-informed UX, research-driven design, and creating experiences that support emotional wellbeing. I learned how to translate behavioral insights into actionable design decisions and validate solutions with real users. Here are the key takeaways and what I would explore next:

Design for emotions, not just content: Users don't just want topic filters; they want their feed to match their emotional state. The same person wants different content when stressed vs. energized.

Simple ≠ less control: Users want power, not complexity. Thoughtful defaults + clear options = meaningful control without overwhelm.

Words matter: In wellbeing design, tone is everything. What feels "helpful" to designers can feel "controlling" to users. Always offer choices, never commands.

Details define trust: Small things (scroll direction, notification timing, UI density) massively impact whether users feel safe or stressed.

Next Steps

Longitudinal Impact Study

Measure AURA's effect on mental health over time across users of different social media platforms.

Continue Iterating on Design

Improve UI and add micro-interactions for emotional reassurance.

Interview Additional Experts

Consult experts in content moderation and wellbeing to validate the approach.